Collette Tauscher

Technology & Supply Chain Strategy Leader | Starbucks | Columbia Sportswear | Nike

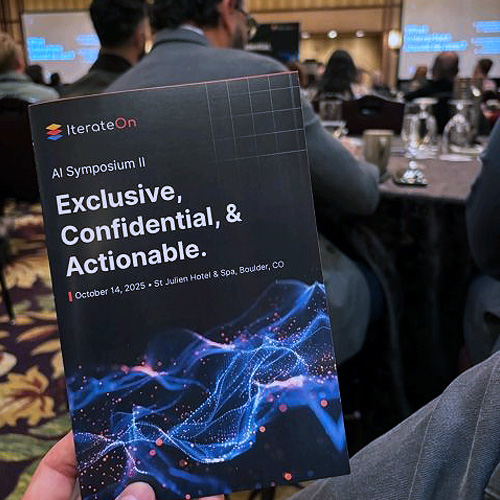

Reflecting on an inspiring week at the IterateOn AI Symposium in Boulder.

The intersection of AI and quantum computing isn't just theoretical anymore—it's happening now, and the pace is remarkable. What struck me most wasn't just the technology itself, but the caliber of minds working to shape its application.

We're entering an era where the tools we use to understand our world—how we map complex systems, manage operations, and measure outcomes—will fundamentally change. The leaders and builders I met this week are at the forefront of that transformation.

Grateful to the IterateOn team for creating space for these critical conversations.

Karla Arzola

CIO | Board Member | Strategist | Campbell County Health | HCA HealthOne | Swedish Medical Center

Reflections from the IterateOn Cross-Industry AI Symposium

Last week’s AI Symposium hosted by Iterate.ai brought together some of the sharpest minds driving real transformation with artificial intelligence, not just talking about it, but building and deploying it.

From healthcare to retail, manufacturing, and finance, one thing was clear: AI isn’t a future concept anymore; it’s an operational necessity.

Top takeaways that hit home for me:

- AI wins are now measured in ROI, not prototypes. The best case studies showed measurable cost reduction and productivity gains — and in healthcare, that translates directly to better patient outcomes.

- Cross-industry learning is where the real breakthroughs happen. Seeing how other sectors use AI to optimize operations, predict issues, and automate complexity gave me new ideas for healthcare applications.

- Governance and ethics are rising to the top of the AI maturity curve. The “move fast and break things” phase is over. We’re entering the “move smart and scale responsibly” era.

- A personal highlight? The demo on how AI agents helped uncover $14M in lost revenue in healthcare, a reminder that innovation and financial stewardship can (and should) go hand in hand.

Huge thanks to the Iterate.ai team for creating a no-fluff, high-value experience that focused on what’s real and working now!

Rory Reichelt

ISV Partnerships & Enterprise AI GTM | 2025 CRN People to Know Recipient | Intel

This is the kind of AI event we need more of...real conversations, real tech, no fluff. Love seeing the full GenAI stack come together to talk strategy and execution.

Vish Panchal

GTM, AI Appliances | ASA Computers

I got to see firsthand how AI is shifting from experimentation to execution.

The message to take away was that AI is already solving real problems, from food insecurity to healthcare to creativity, but privacy and trust are key: without them, innovation can’t grow.

The best companies are those moving fast, testing often, and learning every day.

Iterate brought together brilliant minds from Ulta Beauty, Adobe, Intel, Oracle, IBM, Dell, and many more, plus a State Senator, a Harvard professor, and AI model creators across industries to prove it.

We watched how secure, on-prem AI can transform document retrieval and enterprise workflows.

From privacy-first infrastructures to agentic AI, every discussion reinforced one thing. Grateful to be part of such an inspiring event.

Robert Wamsley Ph.D.

Researcher | Leader | Quantum Rings

Yesterday, Quantum Rings was honored to speak at the IterateOn AI Conference in Boulder, CO—a leading event uniting top enterprise AI leaders nationwide.

Our team joined the "Quantum + AI: A Field Trip Into the Future" session at the Colorado Quantum Incubator. The session featured insightful talks from our own Bob Wold and Robert Wamsley, alongside our friends Eva Yao (CEO of FLARI Tech), Scott Sternberg (Executive Director of CuBits + Colorado Quantum Incubator), Wendy Lea (Board, Elevate Quantum), and Colorado state senator Mark Baisley.

The convergence of quantum computing and AI holds transformative potential. We’re thrilled to see some of the top leaders from the AI space looking for ways to drive innovation together.

Gurpreit Juneja

VP | CAIO | AI | Digital Transformation | Data Science | Visa | STACK Infrastructure

If AI were a power plant, IterateOn was the switchyard—high voltage, grounded lines, real load.

The number that stopped the room? $14M. Found by ONE revenue agent.

THE $14M STORY

One revenue-integrity agent.

$14M in quiet leakage found.

No fanfare. Just math.

This is what happens when we stop building chatbots and start building systems that DO.

IterateOn—you built a builder's room, way to go!

Luis Duarte

Co-founder & CEO | Amoofy

Deeply inspired by the conversations at IterateOn 2025.

A heartfelt thank you to the entire Iterate.ai team for hosting such a forward-thinking and generous gathering — a space where innovation, future-now efforts, and technology met.

I was struck by how often the topic of human stories surfaced throughout the sessions — a powerful reminder that even as AI, data, and automation evolve, the heartbeat of progress remains our ability to listen, connect, and make meaning together.

Special gratitude to Jonathan Greechan from Founder Institute for the insights and for always creating bridges that connect founders driven by both intellect and empathy.

And a big shoutout to Chris Byrne, former IP lawyer and venture partner at Samsung, who beautifully articulated that “the one thing that hasn’t changed in over 300,000 years is how humans codify information — in a story.”

Ganesh Harinath

Former Vice President & CTO @ Verizon Media | Founder & CEO at Fiducia

The invite-only IterateOn Symposium in Boulder, Colorado was outstanding, entirely focused on real-world AI use cases.

It was a wonderful opportunity to make new friends and connections, bringing together a diverse community of leaders and offering a clear view into how the next generation of intelligent and immersive experiences is rapidly taking shape....

Exceptional event.